Using SyncDir in a script

The SyncDir command takes just two parameters, a source and a destination:

SyncDir C:\Data,O:\DataBackup

If you also want to synchronize permissions, use the similar SyncDirSecure command:

SyncDirSecure C:\Shares,\\CanadaServer\c$\Shares

A specialized version for PST (Personal Storage Table) files exists in the form of the BackupPSTFiles command. It automatically queries Outlook for all of the user's PST files and therefore only needs a destination folder to synchronize the PST files to:

BackupPSTFiles O:\PSTBackup

You need this command because users may save PST files in various folders, which makes it impossible to automate backups without a command like this. The BackupPSTFiles command flattens the locations of all PST files and treats them as one big pool serving as the source directory.

Refer to the Outlook page for more context on using the BackupPSTFiles command.

SyncDir as an app

You can use SyncDir in your own scripts, or you can let the Home Screen build a Backup App for you. The latter option is extremely simple: click the "Backup App" icon in the Home Screen under "More Wizards", answer a few questions, and you have a backup app that users can run for ad-hoc backups or that you can include unattended in your existing logon scripts.

If you have already set up a FastTrack logon script, you can include the SyncDir command directly in your script, as explained further down this page. The rest of this page focuses on the first option: building your own custom scripts in the Script Editor that use the SyncDir command for various purposes.

SyncDir in action

Let us have a look at using the SyncDir command in a custom script in the Script Editor.

The movie below uses the script line from the top of this page. SyncDir is so fast that a substantial number of files is needed to clearly see what happens, which is why some 37,000 files are placed in the source directory in the movie.

There are 350 new or changed files, and the destination folder is on a 100 Mbit/s network. The whole synchronization takes about 20 seconds; with no changes at all, it would drop to under 10 seconds.

In a typical backup scenario, a user has far fewer files and only a few of them change, so a logon script backup with SyncDir usually takes just a few seconds.

As you can see in the movie, the graphical user interface that SyncDir shows during synchronization is intended for end users. It therefore looks simple, but still gives some insight into what is going on without overwhelming the end user with information.

SyncDir in logon scripts

If we want to use SyncDir to back up users' documents, one problem has to be addressed first: hard-coded paths must always be avoided to ensure that scripts keep working in the future. For this reason, always use path functions instead of hard-coded paths. To back up the current user's documents to the user's own home folder without hard-coding any paths, the script line could look like this:

SyncDir [UserDocumentsDir],[UserHomeDrive]\Backup

This will resolve the two paths at run-time to get the correct paths for the current user.

A simple template logon script that performs a backup when laptop users come back on the LAN and log on could look like this:

Splash Welcome to the company network,[UserFullName]

Sleep 5

Splash Backing up your documents,Please wait...

If Portable Then SyncDir [UserDocumentsDir],[UserHomeDrive]\Backup

RemoveSplash

Set Printers=[Menu Select printers,Printer|1. Floor left,Printer|1. Floor right,Printer|2. Floor left,Printer|2. Floor right,Printer|Reception]

The simple logon script above is shown in the movie below. Printers would still have to be connected based on the content of the "Printers" variable, but all the graphical interfaces are built in, and the SyncDir command performs a complete backup.

The backup could also be performed in a logoff script, but some users never log off, so a better solution is to perform the quick backup at logon and also put a script on the user's desktop for on-demand backups.

To create a desktop icon called "Backup My Files" for on-demand backups, simply insert this line in the logon script:

If Portable Then WriteFile [UserDesktopDir]\Backup My Files.fsh,"SyncDir [UserDocumentsDir],[UserHomeDrive]\Backup"

If the user deletes the desktop icon, it is simply created again at the next logon. See the logon script page for information on setting up logon scripts.

SyncDir in logon scripts demo

In the video below, Senior Technical Writer Steve Dodson from Binary Research International walks you through how to set up SyncDir for laptop backups in a logon script.

Cancellation

From version 8.1, a cancellation button is shown by default on the user interface. You can remove this button by using the DisableSyncCancel command.

When you use SyncDir to back up users' files, you should allow your users to postpone the backup by pressing the cancel button.

Cancellation is completely safe: when the user clicks the cancel button and confirms, all current I/O operations are flushed and the overall operation is cancelled as soon as this is possible without corrupting files.

If your script needs to detect that a cancellation has happened, check the LastSyncWasCancelled condition.
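SyncDir's internals are not documented here, but the behavior described above (finish the current I/O, then stop without leaving damaged files) can be illustrated with a minimal Python sketch. It assumes a chunked copy that checks a cancellation flag between chunks and writes to a temporary file that only replaces the destination once complete. The function name, chunk size and temp-file strategy are illustrative assumptions, not SyncDir's actual method.

```python
import os
import tempfile
import threading

CHUNK = 1024 * 1024  # 1 MiB per read/write step (an assumed value)


def copy_cancellable(src: str, dst: str, cancel: threading.Event) -> bool:
    """Copy src to dst, checking `cancel` between chunks.

    Data goes to a temporary file in the destination folder, which only
    replaces dst once every chunk has been written and flushed, so a
    cancelled copy never leaves a half-written dst behind.
    Returns True if the copy completed, False if it was cancelled.
    """
    dst_dir = os.path.dirname(os.path.abspath(dst))
    fd, tmp = tempfile.mkstemp(dir=dst_dir)
    try:
        with os.fdopen(fd, "wb") as fout, open(src, "rb") as fin:
            while True:
                if cancel.is_set():
                    return False  # stop at a chunk boundary
                chunk = fin.read(CHUNK)
                if not chunk:
                    break
                fout.write(chunk)
            fout.flush()
            os.fsync(fout.fileno())  # make sure the data reached the disk
        os.replace(tmp, dst)  # swap in the finished file
        return True
    finally:
        if os.path.exists(tmp):
            os.remove(tmp)  # discard the partial file after a cancel or error
```

In a real tool the `cancel` event would be set from the UI thread when the user confirms the cancel dialog, while worker threads keep calling a function like this one.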

Very large files

A classic backup problem is backing up Outlook PST files, where users typically archive their older mail. These files can be several gigabytes in size, which makes them hard to back up, but SyncDir splits large files into smaller blocks and operates on a per-block basis instead of a per-file basis. Typical backup solutions require you to install software on the destination computer to achieve this behavior, but SyncDir does not.

When files are over 50 megabytes in size, SyncDir switches to block-level operation. The first time a file is copied, the entire file is copied, but on subsequent backups only changed blocks are copied. The SyncDir user interface is kept simple for the end user, but you can tell that it is skipping blocks when the status says "Scanning" instead of "Queuing".

Internal tests on PST files of several gigabytes with only marginal changes showed backup times dropping to about 5-10 seconds.
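The idea of block-level operation can be sketched in Python: hash fixed-size blocks of both files and rewrite only the blocks that differ. This is a minimal sketch, assuming direct comparison of source and destination blocks with SHA-256 and a 4 MiB block size; SyncDir's real block size and comparison method are not documented here, and `sync_blocks` is a hypothetical helper.

```python
import hashlib
import os

BLOCK_SIZE = 4 * 1024 * 1024  # assumed block size, not SyncDir's documented value


def block_hashes(path: str, block_size: int = BLOCK_SIZE) -> list[bytes]:
    """Return a SHA-256 digest for each fixed-size block of a file."""
    hashes = []
    with open(path, "rb") as f:
        while block := f.read(block_size):
            hashes.append(hashlib.sha256(block).digest())
    return hashes


def sync_blocks(src: str, dst: str, block_size: int = BLOCK_SIZE) -> list[int]:
    """Bring dst in line with src by rewriting only blocks that differ.

    Returns the indices of the blocks that were written.
    """
    if not os.path.exists(dst):
        open(dst, "wb").close()  # first run: every block counts as changed
    old = block_hashes(dst, block_size)
    new = block_hashes(src, block_size)
    changed = [i for i, h in enumerate(new) if i >= len(old) or old[i] != h]
    with open(src, "rb") as fin, open(dst, "r+b") as fout:
        for i in changed:
            fin.seek(i * block_size)
            fout.seek(i * block_size)
            fout.write(fin.read(block_size))
        fout.truncate(os.path.getsize(src))  # drop the tail if src shrank
    return changed
```

On the first run every block is written; after a one-byte change in a multi-gigabyte PST file, only the block containing that byte is rewritten.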

The threshold at which SyncDir switches to block-level operation follows the large file threshold and can be changed with the SetIOThreshold command (see further down).


Foreseeing problems

Typically only laptop computers need to run a backup, as desktop users already store their files on network shares. To filter out desktop computers, use the "Portable" condition.

There are some other scenarios to consider when writing your SyncDir script line for backups.

What happens if the user logs on to another laptop? The backup from that computer would overwrite the backup files from the first one. To avoid this, append the [ComputerName] function to the destination path, as in the more robust example at the end of this section, so that each computer that performs a backup gets its own backup directory.

Another scenario that is often overlooked until it is too late is when a user's computer is reinstalled. The user then needs to restore the files, but the first thing that happens is that the user logs on and all the backup files are removed, because the source directory is now empty.
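Why an empty source wipes the backup follows directly from mirror semantics. The Python model below is a simplified illustration, not SyncDir code: it assumes files are compared by size and modification time, which is common in sync tools but not documented for SyncDir here, and `mirror_plan` is a hypothetical helper.

```python
FileInfo = tuple[int, float]  # (size in bytes, last modification time)


def mirror_plan(source: dict[str, FileInfo],
                dest: dict[str, FileInfo]) -> tuple[list[str], list[str]]:
    """Return (to_copy, to_delete) for a one-way mirror, keyed by relative path."""
    # New files, or files whose size/timestamp differ, are copied.
    to_copy = sorted(p for p, info in source.items() if dest.get(p) != info)
    # Anything in the destination that no longer exists in the source is deleted.
    to_delete = sorted(p for p in dest if p not in source)
    return to_copy, to_delete
```

With an empty source, as on a freshly reinstalled computer, every file in the backup lands in the delete list: `mirror_plan({}, backup)` returns no copies and deletes everything in `backup`.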

You could of course handle the reinstall scenario by using the "Ask" condition to ask users whether they want to back up every time they log on, but this would generally annoy them. A better solution is to use the [WindowsInstallDate] function to test whether Windows was reinstalled today and, if so, simply skip the backup.


Putting this together into a more robust version, the backup script lines could look like this. As an extra precaution, they also verify that the user has a valid home drive connected:

If Portable And [WindowsInstallDate]<>[Date] And Not Empty [UserHomeDrive] Then

SyncDir [UserDocumentsDir],[UserHomeDrive]\Backup\[ComputerName]

End If
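For readers more at home in a general-purpose language, the same decision can be expressed as a small Python function. This is a hypothetical translation of the script condition above, not part of FastTrack: the inputs stand in for the Portable condition, [WindowsInstallDate], [Date], [UserHomeDrive] and [ComputerName].

```python
from datetime import date
from typing import Optional


def backup_destination(is_portable: bool, install_date: date, today: date,
                       home_drive: str, computer_name: str) -> Optional[str]:
    """Return the per-computer backup folder, or None to skip the backup."""
    if not is_portable:
        return None  # desktops already keep their files on network shares
    if install_date == today:
        return None  # reinstalled today: an empty source would wipe the backup
    if not home_drive:
        return None  # no home drive connected, nowhere to back up to
    # Same shape as [UserHomeDrive]\Backup\[ComputerName] in the script
    return f"{home_drive}\\Backup\\{computer_name}"
```

Each early return corresponds to one part of the `If ... And ... Then` condition, which makes it easy to see which check stopped a backup.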

As an additional safety measure, SmartDock can be used to remind laptop users to refresh their backups; see the SmartDock documentation for more information.

Multi-threading

By default, SyncDir uses 8 threads when synchronizing data, which means that up to 8 files can be copied at the same time. It may not seem logical that performing I/O operations in parallel is faster than copying sequentially, but it is, because of various transfer latencies.
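The general technique of overlapping file copies can be sketched with Python's standard thread pool. This is an illustration of parallel copying in general, assuming a pre-built list of (source, destination) pairs; it is not SyncDir's implementation, and `copy_parallel` is a hypothetical helper whose default of 8 workers simply mirrors SyncDir's default thread count.

```python
import os
import shutil
from concurrent.futures import ThreadPoolExecutor


def copy_parallel(pairs: list[tuple[str, str]], threads: int = 8) -> int:
    """Copy (source, destination) file pairs using a pool of worker threads.

    While one thread waits on a read, a virus scan or a network round trip,
    the others keep transferring, which is where the speed-up comes from.
    Returns the number of files copied.
    """
    def copy_one(pair: tuple[str, str]) -> None:
        src, dst = pair
        os.makedirs(os.path.dirname(dst) or ".", exist_ok=True)
        shutil.copy2(src, dst)  # copies data plus timestamps

    with ThreadPoolExecutor(max_workers=threads) as pool:
        # list() consumes the results so any copy error is re-raised here
        list(pool.map(copy_one, pairs))
    return len(pairs)
```

Because each worker spends most of its time waiting on I/O, threads are a good fit here despite Python's global interpreter lock.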

Let's look at a single-threaded approach to copying a file over a network. First the file has to be read, while the destination waits to receive the data. The data will then most likely be scanned for viruses before it even leaves the source computer, and travelling over the network again involves handshaking and other latency. Once the data arrives at the target computer, it will most likely be scanned for viruses again and then written to disk, while the source computer is idle. In most cases, the virus scanner is the bottleneck when transferring files, resulting in idle I/O time.

Processing in parallel reduces this idle time. If your source and/or destination spans multiple disks, as in a SAN setup, or either one is a solid state disk, parallel processing will improve performance dramatically. But even with single-drive sources and/or destinations, parallel processing is still faster, as modern hard disk optimizations like read-ahead and native command queuing favor parallel processing. The example in the next section uses a single SATA disk as the destination.

There are, however, some cases where copying files in parallel is not faster. Above a certain file size, latency makes up a much smaller share of the transfer time, and parallel copying will most likely decrease performance slightly. For this reason, SyncDir has a threshold above which it does not use parallel processing. By default, any file over 50 megabytes is queued for sequential copying once all parallel operations are complete. If, for instance, there are 3 files of 600 megabytes in a set of 1000 changed or new files, the 997 other files are copied in parallel first, and the 3 large files are copied sequentially at the end. The optimal threshold in any given scenario depends on the source and destination hardware, but 50 megabytes is considered a good threshold in most scenarios. This threshold (and the number of threads used) can be tweaked if desired; see further down for details.
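The queuing rule just described can be modeled as a small planning step that splits the changed files into a parallel and a sequential queue. This Python sketch is hypothetical (`plan_copies` and `CopyPlan` are illustrative names); it assumes 1 megabyte = 1,048,576 bytes and also encodes the rule, described under "Tweaking SyncDir", that a thread count of 1 makes everything sequential.

```python
from dataclasses import dataclass

MB = 1024 * 1024  # assumed definition of a megabyte


@dataclass
class CopyPlan:
    parallel: list[str]    # small files, copied by the thread pool first
    sequential: list[str]  # large files, copied one at a time afterwards


def plan_copies(sizes: dict[str, int], threads: int = 8,
                threshold_mb: int = 50) -> CopyPlan:
    """Split changed files (name -> size in bytes) into two queues.

    Files over the threshold wait until the parallel work is done;
    with a single thread, everything is copied sequentially.
    """
    if threads <= 1:
        return CopyPlan(parallel=[], sequential=list(sizes))
    limit = threshold_mb * MB
    parallel = [name for name, size in sizes.items() if size <= limit]
    sequential = [name for name, size in sizes.items() if size > limit]
    return CopyPlan(parallel, sequential)
```

For the example above, 997 small files and three 600 MB files give a plan with 997 entries in `parallel` and the 3 large files in `sequential`.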

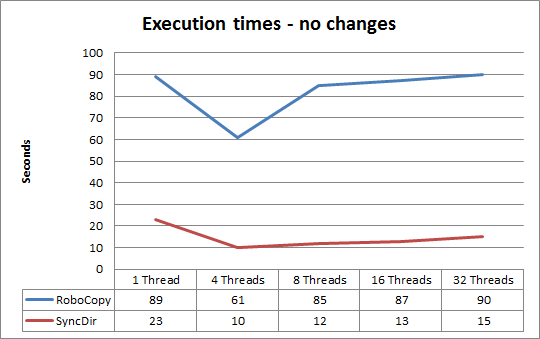

Performance test with multi-threading

So what is the actual performance difference between single-threaded and multi-threaded synchronization? The answer depends on how much data has actually changed, as this lab test demonstrates.

To get a clear picture of performance with various thread settings, the tests use this setup:

Source structure:

| Property | Value |
|---|---|
| Total size | 6 GB |
| Files | 40,000 |
| Directories | 12,000 |
| Network | Gigabit |

Computers:

| | Source | Destination |
|---|---|---|
| Disk | OCZ Vertex Solid State 100GB | WDC Serial-ATA 2TB 7200rpm |
| OS | Windows 7 64-bit | Windows Server 2008 R2 64-bit |
| Hardware | Intel i5 2.2 GHz laptop | Intel Core 2 Duo 2.67 GHz server |
| Virus scanner | Microsoft Security Essentials 2.0 | McAfee VirusScan 8.7.0i |

To compare SyncDir's performance with other sync tools, performance data for the same tests run with RoboCopy is overlaid in the charts.

The most interesting aspect of synchronizing data is how long it takes to synchronize few or no changes, because this is the most typical real-life scenario. If you are using SyncDir in a logon script, the most relevant parameter is how fast a few changes can be identified and copied, because a user typically changes only a few files from one backup to the next. Let's see how SyncDir and RoboCopy perform with various thread settings when only a handful of files have changed (lower is better):

In this scenario, it becomes clear that performance is considerably better with at least 4 threads than with just one; SyncDir can now sync 40,000 files and 12,000 directories in about 10 seconds.

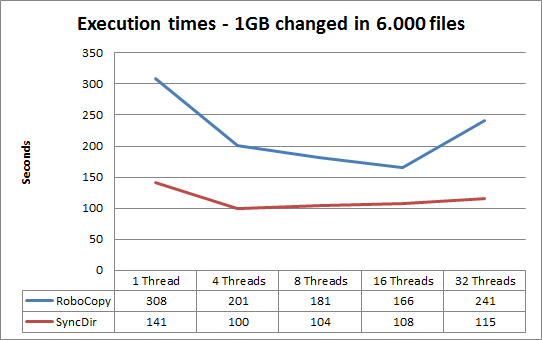

Now let's see what happens if we remove one in every six files from the destination structure:

Using at least 4 threads is still faster than using just one thread. This is because much of the time is still spent on various latencies, so parallel operations still outperform single-threaded execution. Beyond 16 threads, there is no further performance gain in this setup for either tool, as the graph flattens out or even climbs as threads are added. However, if the destination were a SAN, increasing the number of threads would in most cases reduce the execution time even further.

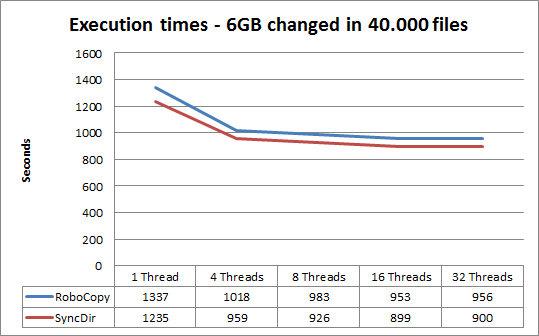

Let's see what happens if the destination is removed completely, meaning that all files and directories have to be copied:

Now the results with 4 or more threads come close to those with a single sequential thread, because most of the execution time is spent reading and writing files, and SyncDir and RoboCopy therefore perform almost identically.

Tweaking SyncDir

By default, SyncDir uses 8 threads to copy files and a large file threshold of 50 megabytes. These settings can be changed by issuing a SetIOThreads and/or SetIOThreshold command before using a multi-threaded command. The following commands use multi-threaded execution with the number of threads defined by the SetIOThreads command: SyncDir, SyncDirSecure, CopyDir, CopyDirSecure, DeleteSubFiles and DeleteDir. Specifying 1 disables multi-threading, and all operations are then performed sequentially regardless of the SetIOThreshold setting.

SyncDir and CopyDir type operations can also log their executions using the EnableSyncLog command.

The example below doubles the default number of threads to 16, doubles the default large file threshold to 100 megabytes and logs executions to \\AcmeSrv\Admin$\SyncIO.log:

SetIOThreads 16

SetIOThreshold 100

EnableSyncLog \\AcmeSrv\Admin$\SyncIO.log

SyncDir C:\Data,O:\Backup\Portable\Data

SyncDir versus SyncDirSecure

The difference between SyncDir and SyncDirSecure is that SyncDirSecure also synchronizes permissions. As a rule of thumb, the overhead is up to 20 percent over SyncDir. The more files are changed or new, the smaller the overhead becomes. With just a few changes in the source, the overhead becomes marginal.

There is one thing to be aware of when synchronizing permissions: the file permissions dilemma. If permissions have been changed on files (not folders) in the source or destination without any content changes, and the files are not new, this change can only be detected by scanning the permissions of all files as well as folders.

The problem is that scanning permissions on all files is a very costly operation that solves a largely theoretical problem. Setting explicit permissions on a single file is not best practice, because if the file is deleted and created again, the permissions are inherited from the parent directory. Combined with the fact that permissions are copied whenever a file is new or changed, this scenario is extremely unlikely, and should it happen, it is not a disaster as long as folder permission changes are detected and synchronized.

Folders are always scanned because these are parent objects to inherited permissions on files and subfolders.

For these reasons SyncDirSecure will by default not identify file permission changes for existing unchanged files.

This is also RoboCopy's default behavior; refer to the related TechNet article for details.

Changing the default behavior is not recommended, because of the performance penalty of solving a very theoretical problem, combined with the insignificance of the scenario above. It can, however, be overridden with the EnableSyncRepairMode command, which enables scanning all files for security changes as well, but makes the operation considerably slower, especially when there are few or no changes. In RoboCopy terms, the default behavior is the same as using the /Mir and /Sec switches, and enabling the secure repair mode yields the same result as using /Mir, /Sec and /SecFix. This table shows when changes in permissions are enforced during synchronization:

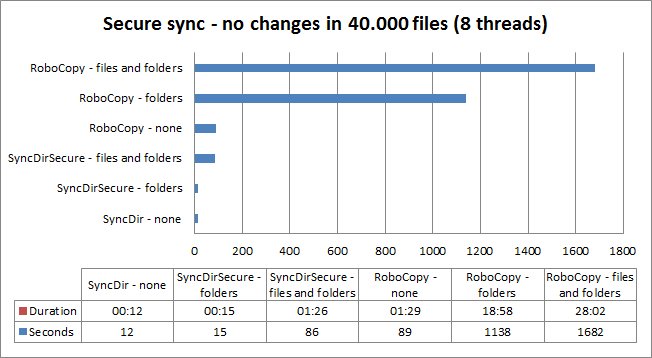
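The rules described in the text can also be summarized as a small decision function. This is a hypothetical Python reading of the prose above (not of the table image, and not SyncDir code): folders are always checked, new or changed files carry their permissions along, and unchanged files are only checked in repair mode.

```python
def syncs_permissions(is_dir: bool, is_new: bool, content_changed: bool,
                      repair_mode: bool = False) -> bool:
    """Return True if a SyncDirSecure-style pass would bring this item's
    permissions in line with the source (hypothetical model)."""
    if is_dir:
        return True  # folders are always scanned for permission changes
    if is_new or content_changed:
        return True  # permissions are copied along with the file
    return repair_mode  # unchanged files: only with EnableSyncRepairMode
```

The last line is the expensive case: enabling it means reading the security descriptor of every unchanged file on every run.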

The most interesting parameter in relation to synchronization is performance when there are few or no changes, as this is the most typical scenario. If we go back to the first performance chart and run the same tests, this time including synchronization of permissions, it becomes clear that scanning files for changes in permissions dramatically increases the execution time.

Excluding files and directories from synchronization

Directories and files, or wildcard patterns matching them, can be excluded before issuing a SyncDir or CopyDir command. The following commands are available:

| Purpose | Command |
|---|---|
| Exclude a single file | SyncExcludeFile |
| Exclude one or more file patterns | SyncExcludeFiles |
| Exclude a single directory | SyncExcludeDir |
| Exclude one or more directory patterns | SyncExcludeDirs |
| Clear all exclusions | SyncExcludeClear |

The exclusion rules accumulate and are kept for the lifetime of the script, unless a SyncExcludeClear command is issued to clear them. Let's take a look at a common example with SyncDir:

If Portable And UserOnceADay Then

SyncExcludeFiles *.mp3,*.avi,*.mpg,*.iso,*.exe

SyncDir [UserDocumentsDir],[UserHomeDrive]\Backup\[ComputerName]

End If

Once a day, all the files in the user's documents directory are backed up to the user's home directory. To preserve space, a set of well-known file types is excluded: music, movies, disk images and executables. The computer name is included in the destination to avoid accidental deletion of data in case the user has more than one portable computer or gets a replacement.

There are also two commands called SyncIncludeFile and SyncIncludeFiles. These do the opposite: only files that match are included, which could be useful, for example, when backing up PST files while all other file types must be excluded.
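How accumulated exclude and include rules combine can be modeled with Python's `fnmatch` module. The `SyncFilter` class below is hypothetical and only mirrors the behavior described on this page; two details are assumptions: matching is case-insensitive (as on Windows file systems), and clearing also resets include rules, whereas the text only says SyncExcludeClear clears exclusions.

```python
from fnmatch import fnmatchcase


class SyncFilter:
    """Hypothetical model of accumulated exclude/include rules."""

    def __init__(self) -> None:
        self.file_excludes: list[str] = []
        self.dir_excludes: list[str] = []
        self.file_includes: list[str] = []

    def clear(self) -> None:
        """Like SyncExcludeClear (assumed here to reset include rules too)."""
        self.file_excludes.clear()
        self.dir_excludes.clear()
        self.file_includes.clear()

    def exclude_files(self, *patterns: str) -> None:
        self.file_excludes += [p.lower() for p in patterns]

    def exclude_dirs(self, *patterns: str) -> None:
        self.dir_excludes += [p.lower() for p in patterns]

    def include_files(self, *patterns: str) -> None:
        self.file_includes += [p.lower() for p in patterns]

    def accepts(self, rel_path: str) -> bool:
        """Decide whether a path relative to the sync root is synchronized."""
        *dirs, name = rel_path.lower().replace("\\", "/").split("/")
        if any(fnmatchcase(d, p) for d in dirs for p in self.dir_excludes):
            return False
        if any(fnmatchcase(name, p) for p in self.file_excludes):
            return False
        if self.file_includes:  # include rules: only matching files pass
            return any(fnmatchcase(name, p) for p in self.file_includes)
        return True
```

Calling `exclude_files("*.mp3", "*.avi", "*.mpg", "*.iso", "*.exe")` reproduces the daily backup example, and `include_files("*.pst")` on a cleared filter models a PST-only backup.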

Extracting information from last synchronization

After a SyncDir, SyncDirSecure, CopyDir or CopyDirSecure operation completes successfully, data about the operation can be extracted, which could be useful for logging or for showing information to the end user. These functions are available:

| Information | Function |
|---|---|
| Number of files in the source structure | LastSyncFileCount |
| Number of directories in the source structure | LastSyncDirCount |
| Number of new files created in the destination structure | LastSyncNewFileCount |
| Number of new or changed files created in the destination structure | LastSyncChangedFileCount |
| Number of new directories created in the destination structure | LastSyncNewDirCount |
| Total duration of the operation | LastSyncDuration |
| Total size of files in the source structure | LastSyncSize / LastSyncSizeKB / LastSyncSizeMB / LastSyncSizeGB |
| Total size of new or changed files in the source structure | LastSyncChangedSize / LastSyncChangedSizeKB / LastSyncChangedSizeMB / LastSyncChangedSizeGB |

Two collections, LastSyncFiles and LastSyncChangedFiles, are also available to get a list of all files and of changed files, respectively.
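To make the relationship between these counters concrete, here is a hypothetical Python model that derives some of them from per-file results. The `SyncResult` record, the `summarize` helper and the 1 MB = 1,048,576 bytes definition are assumptions for illustration; how SyncDir actually computes its values is not documented here.

```python
from dataclasses import dataclass

MB = 1024 * 1024  # assumed: 1 MB = 1,048,576 bytes


@dataclass
class SyncResult:
    """Hypothetical record of one file seen during a synchronization."""
    path: str
    size: int    # bytes
    status: str  # "new", "changed" or "unchanged"


def summarize(results: list[SyncResult]) -> dict[str, float]:
    """Compute LastSync*-style counters from per-file results."""
    changed = [r for r in results if r.status in ("new", "changed")]
    return {
        "FileCount": len(results),
        "NewFileCount": sum(r.status == "new" for r in results),
        "ChangedFileCount": len(changed),  # new or changed, as in the table
        "SizeMB": round(sum(r.size for r in results) / MB, 1),
        "ChangedSizeMB": round(sum(r.size for r in changed) / MB, 1),
    }
```

Note that, as in the table, the changed count includes new files, so it is always at least as large as the new file count.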

SyncDir application

The example below is an application that asks the user for a source and a destination and synchronizes the files from the source to the destination, asking whether to overwrite if the destination is not empty.

This script can be inserted directly into the Script Editor by selecting the "Backup Assistant" menu item from the editor menu "Documentation -> Insert Example Script". Note that the version available in the Script Editor uses CopyDir instead of SyncDir to avoid accidental deletion of files during testing.

Set Source=[BrowseForFolder Select backup source]

If [Var Source]<>"" Then

Set Dest=[BrowseForFolder Select backup destination]

If [Var Dest]<>"" Then

If [DirFileCount [Var Dest]]>0 Or [DirSubDirCount [Var Dest]]>0 Then

If Not Ask "The destination directory [Var Dest] is not empty. Would you like to overwrite?" Then Exit

End If

''==== EXEC SYNCHRONIZATION ===

SyncDir [Var Source],[Var Dest]

''==== BUILD SUMMARY TEXT INTO A VARIABLE ===

Set Question = "Backup complete:[Return][Return]_

Duration: [LastSyncDuration][Return]_

Files: [LastSyncFileCount][Return]_

Dirs: [LastSyncDirCount][Return]_

New Files: [LastSyncNewFileCount][Return]_

Changed files: [LastSyncChangedFileCount][Return]_

New dirs: [LastSyncNewDirCount][Return]_

Size: [LastSyncSizeMB]MB[Return]_

Changed size: [LastSyncChangedSizeMB]MB[Return][Return]_

Would you like to see changed files?"

''==== SHOW SUMMARY AND ASK TO VIEW CHANGED FILES ===

If Ask [Var Question] Then List "Changed files",[LastSyncChangedFiles]

End If

End If

Once the backup is complete, the script shows a summary of the synchronization. Answering "Yes" shows a list of changed or new files, as shown to the right.

This example application could be adapted to your users' needs. The script could then be made available on a public network location so that users can back up their data themselves. The script could also be compiled into a single EXE file and given to your users to allow them to back up their data to other locations. Refer to the page on compiling scripts for information on how to compile your script into an EXE file.